Petabyte-scale Cloud Migration (AWS to GCP)

L*VIS, 2022

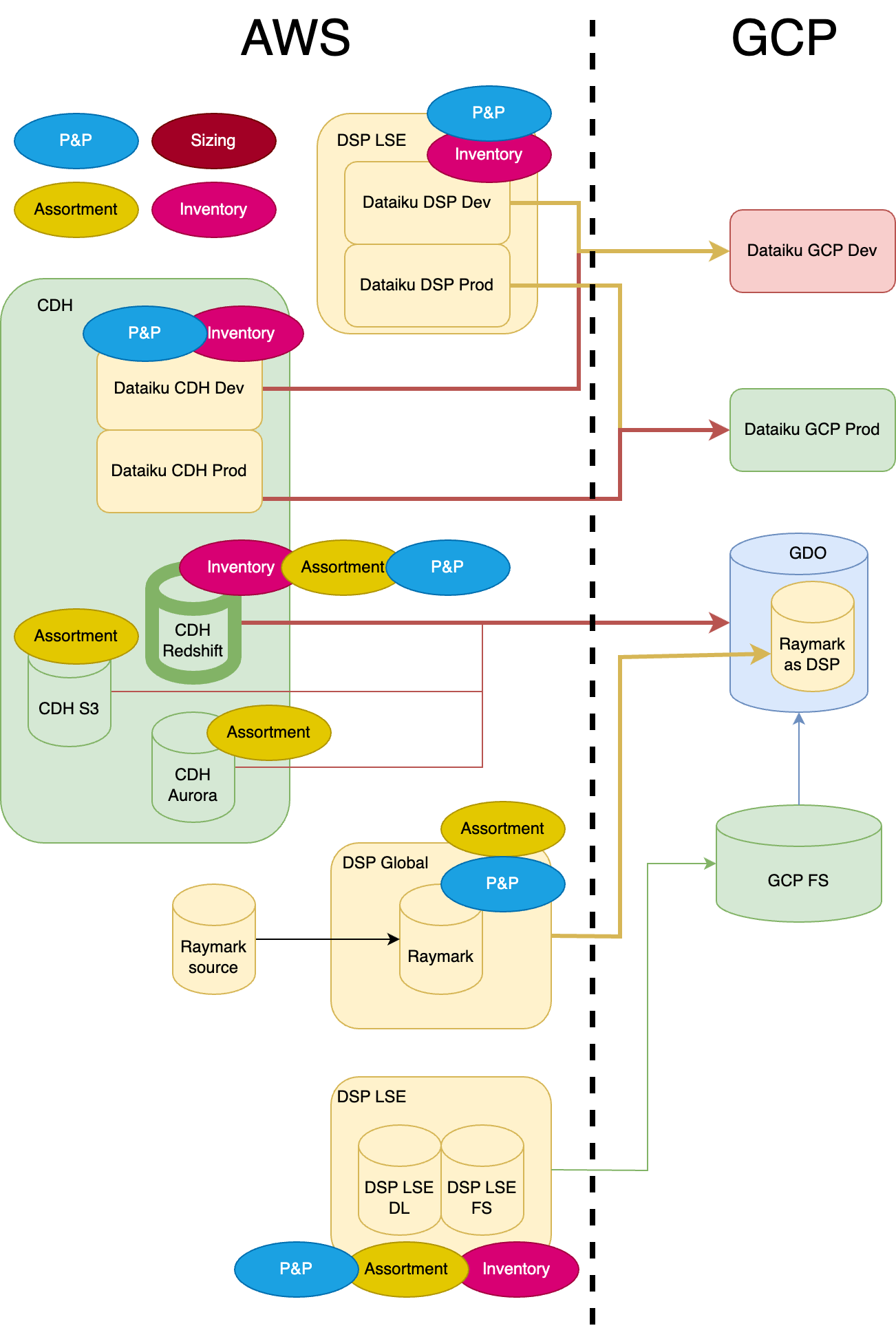

Background (Why)

In 2022, the L*vis Chief Digital Officer initiated a strategic shift to migrate the company’s petabyte-scale cloud infrastructure and data ecosystems from AWS to Google Cloud Platform (GCP). This decision was driven by long-term commercial and competitive risks: reliance on AWS (owned by Amazon, a retail and tech competitor) posed a vulnerability to L*vis customer data sovereignty and financial independence.

What Was Delivered

As Lead Data Engineer for Europe, I directed the end-to-end migration of core data ecosystems, including foundational pipelines, BI reporting tools, and data science platforms. Scope covered:

1. Data Engineering: Replatforming of 200+ ETL pipelines and orchestration workflows.

2. BI & Analytics: Migration of 15+ regional data marts and Tableau/Power BI reporting tools.

3. Data Science: Transition of ML models and deprecation of legacy tools (e.g., Dataiku).

Impact & KPIs

1. Accelerated timeline: Completed full migration in 8 months (33% faster than the 12-month plan).

2. Cost efficiency: Saved $1.5M annually by retiring Dataiku licenses and consolidating tooling.

3. Zero business disruption: Maintained 99.9% system availability during migration.

4. Scalability: Enabled 40% faster data processing post-GCP optimization.

General Migration Approaches

1. Lift-and-Shift

What:

Replicate legacy systems “as-is” to the new cloud without much redesign.When:

Tight deadlines, stable systems, stakeholders aligned speed over perfection.2. Optimize First

What:

Modernize systems (naming convention, best practices, etc.) on the old cloud before migrating.When:

Broken legacy systems, long-term focus.3. Hybrid Model

What:

Migrate first, retire high-cost tools mid-process, and optimize post-migration.When:

Need quick wins, mixed quality on Legacy systems, difficult stakeholders.Lift-and-Shift

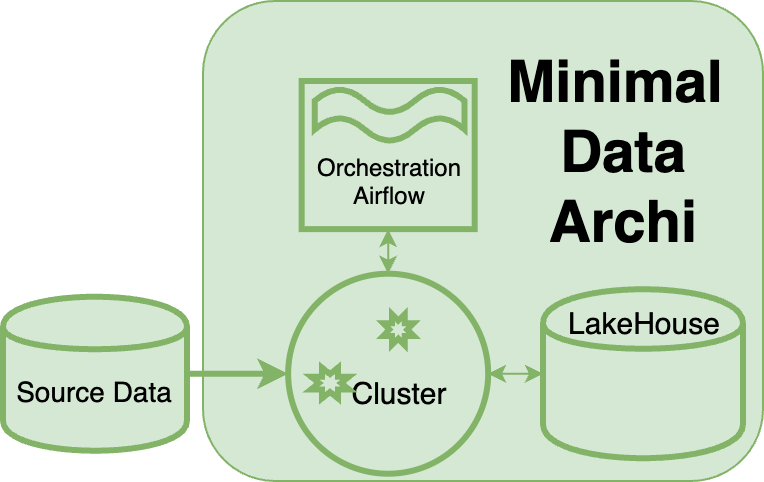

approach, leveraging theMinimal Data Architecture

andProd-Only Data Lineage

principles to prioritize speed and cost-efficiency, ensuring a swift response to our retail business's urgent needs during the pandemic.How It Was Executed

L*vis Lift-and-Shift Cloud Migration Steps

1, Cross-Functional Alignment:

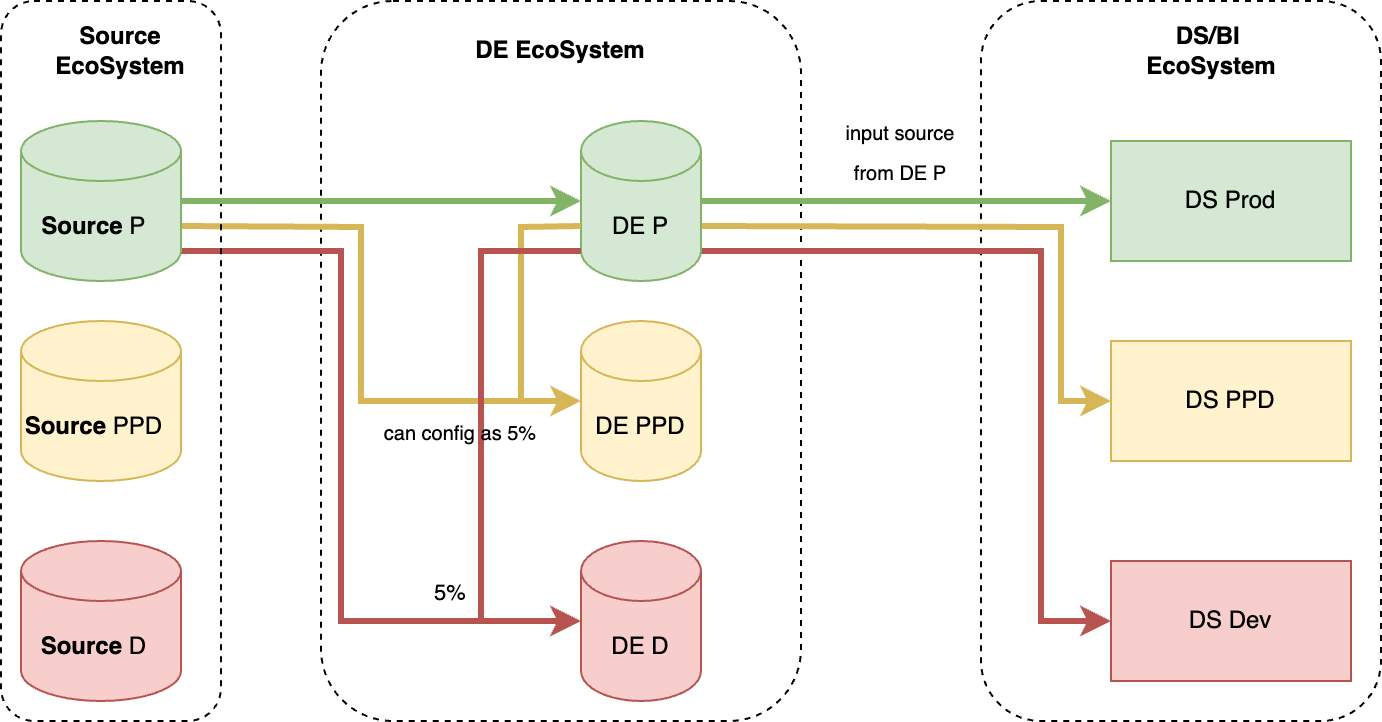

Partnered with data science, analytics, platform, security, and business teams to align priorities and timelines, and ensure minimal downtime.2, Phased Migration Strategy:

Designed a phased migration, prioritizing low-risk, high-cost systems (e.g. Dataiku, CDL) first. Mapped ingress sources, Lift & Shift SQL-based business logic, and delivered early wins to build stakeholder confidence.3, Automated Validation Framework:

Implemented automated checks with aligned acceptance principles (e.g., 5% tolerance thresholds) to ensure data integrity and accelerate stakeholder validation.4, Continuous Optimization:

Established a prioritized backlog to address technical debt, applying naming conventions, coding best practices, and iterative improvements post-migration.Ingress Source Mapping (Most Important)

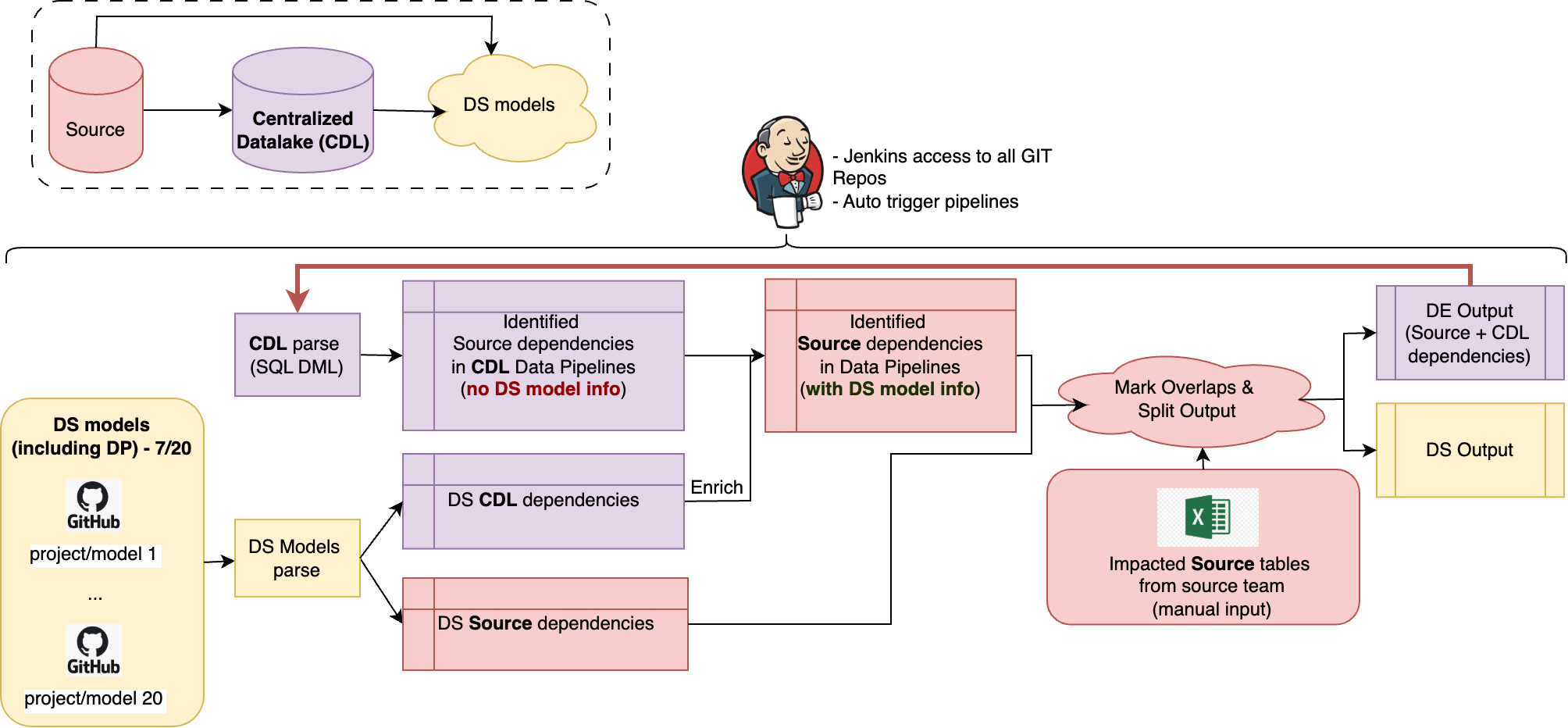

Accurate source mapping is foundational to lift-and-shift migrations: column-level fidelity at the source is what preserves critical transformation logic downstream. The rest is execution.

- Speed is maximized when source data remains structurally consistent (tables, columns, row volumes).
- Otherwise, the process begins with cross-team collaboration for ingress validation, accelerated by automated identification of source data dependencies at the table and column levels, along with automated validation frameworks.

Transformation as Business Logic (Most Challenging)

1. Undocumented Code Complexity: Legacy transformations often lack inline comments, documentation, or version history, turning codebases into “black boxes.”

2. Knowledge Gaps: Critical tribal knowledge leaves with departed colleagues, creating gaps in understanding dependencies (e.g., why Column X was excluded from a revenue calculation).

3. Validation Complexity: Legacy outputs lack clear benchmarks, making it difficult to confirm the accuracy of migrated logic at scale.

L*vis Lift-and-Shift: Stability First, Optimization Later

L*vis adopted a Lift-and-Shift approach to minimize transformation during migration, keeping business logic intact and avoiding disruptions. This enabled a faster transition to the new cloud, with optimizations planned post-migration to systematically remove technical debt.

Egress Output Validation (Most Time-Consuming)

Key Mitigations

1. Automated Comparison Frameworks: Reduce manual effort with scripts that validate outputs at scale.

2. Pre-Aligned Acceptance Criteria: Define thresholds (e.g., 5% variance) and priorities (e.g., recent and active data migrate first) upfront to streamline decisions.

Migration Examples
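
As one illustration of the automated comparison and pre-aligned acceptance criteria described under Egress Output Validation, the sketch below compares summary metrics computed on the legacy and migrated outputs against a single relative tolerance. It is a minimal sketch under stated assumptions, not the framework used in the project: the metric names, the `compare_outputs` helper, and the sample values are all hypothetical, and only the 5% tolerance comes from the text above.

```python
from dataclasses import dataclass


@dataclass
class MetricCheck:
    name: str
    relative_diff: float
    passed: bool


def relative_diff(legacy: float, migrated: float) -> float:
    """Relative difference of the migrated value against the legacy baseline."""
    if legacy == 0:
        # No baseline to divide by: two zeros match, anything else fails.
        return 0.0 if migrated == 0 else float("inf")
    return abs(migrated - legacy) / abs(legacy)


def compare_outputs(legacy: dict, migrated: dict, tolerance: float = 0.05) -> list:
    """Check every metric from both platforms against one agreed tolerance."""
    results = []
    for name in sorted(legacy.keys() | migrated.keys()):
        if name not in legacy or name not in migrated:
            # A metric missing on either side is always reported as a failure.
            results.append(MetricCheck(name, float("inf"), False))
            continue
        diff = relative_diff(legacy[name], migrated[name])
        results.append(MetricCheck(name, diff, diff <= tolerance))
    return results


# Hypothetical metrics, e.g. collected by running the same aggregate query
# against the legacy (AWS) and migrated (GCP) copies of one table.
legacy_metrics = {"sales.row_count": 1000, "sales.revenue_sum": 250000.0}
migrated_metrics = {"sales.row_count": 990, "sales.revenue_sum": 230000.0}

for check in compare_outputs(legacy_metrics, migrated_metrics):
    status = "PASS" if check.passed else "FAIL"
    print(f"{status} {check.name}: {check.relative_diff:.1%} difference")
```

Agreeing on the tolerance before the comparison runs means a failed check becomes a work item for engineering rather than a negotiation with stakeholders.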

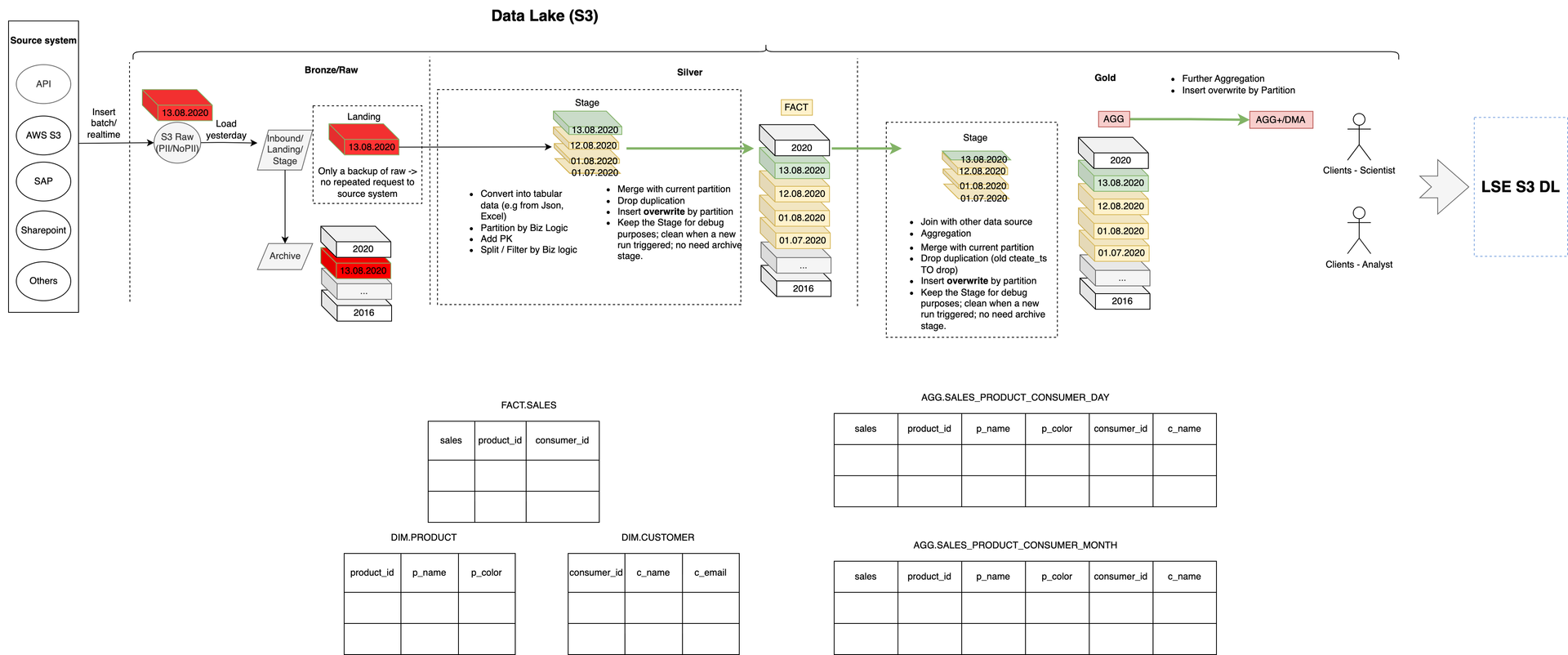

Minimal Data Architecture: A Simplified, Flexible Ecosystem

A streamlined data architecture with only storage, Spark cluster computation, and orchestration ensures adaptability across platforms. This principle accelerated migration by enabling 1:1 mapping between ecosystems (e.g., AWS S3 ↔ GCP Cloud Storage, AWS EC2 ↔ GCP Compute Engine), simplifying transitions and reducing complexity.
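
To make the 1:1 mapping idea concrete, here is a minimal sketch of how storage paths could be translated from S3 to Cloud Storage when the architecture is limited to object storage plus Spark. The bucket names and the `to_gcs_uri` helper are hypothetical and not taken from the project; the sketch only assumes that jobs address data through `s3://`, `s3a://`, or `s3n://` URIs on AWS and `gs://` URIs on GCP.

```python
# Hypothetical mapping from legacy S3 buckets to their Cloud Storage equivalents.
BUCKET_MAP = {
    "legacy-raw-eu": "lake-raw-eu",
    "legacy-curated-eu": "lake-curated-eu",
}

S3_SCHEMES = {"s3", "s3a", "s3n"}


def to_gcs_uri(s3_uri: str, bucket_map: dict) -> str:
    """Rewrite an S3 object URI to the matching gs:// URI, keeping the key."""
    scheme, separator, rest = s3_uri.partition("://")
    if not separator or scheme not in S3_SCHEMES:
        raise ValueError(f"not an S3 URI: {s3_uri}")
    bucket, _, key = rest.partition("/")
    if bucket not in bucket_map:
        # Fail loudly so unmapped sources surface during planning, not at runtime.
        raise ValueError(f"no target bucket mapped for: {bucket}")
    return f"gs://{bucket_map[bucket]}/{key}"


print(to_gcs_uri("s3a://legacy-raw-eu/sales/2022/part-0.parquet", BUCKET_MAP))
```

Because only the URI prefix changes, pipeline code that reads and writes through such paths can in principle move between clouds with a configuration change rather than a rewrite.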

Many leading companies, including Netflix, H&M, Uber, Airbnb, Shopify, Spotify, and Zalando, adopt Minimal Data Architecture to enhance agility, cost efficiency, and cross-platform adaptability. By leveraging a streamlined stack with storage, Spark-based computation, and orchestration, they simplify migrations, ensure scalability, and reduce complexity across cloud environments.

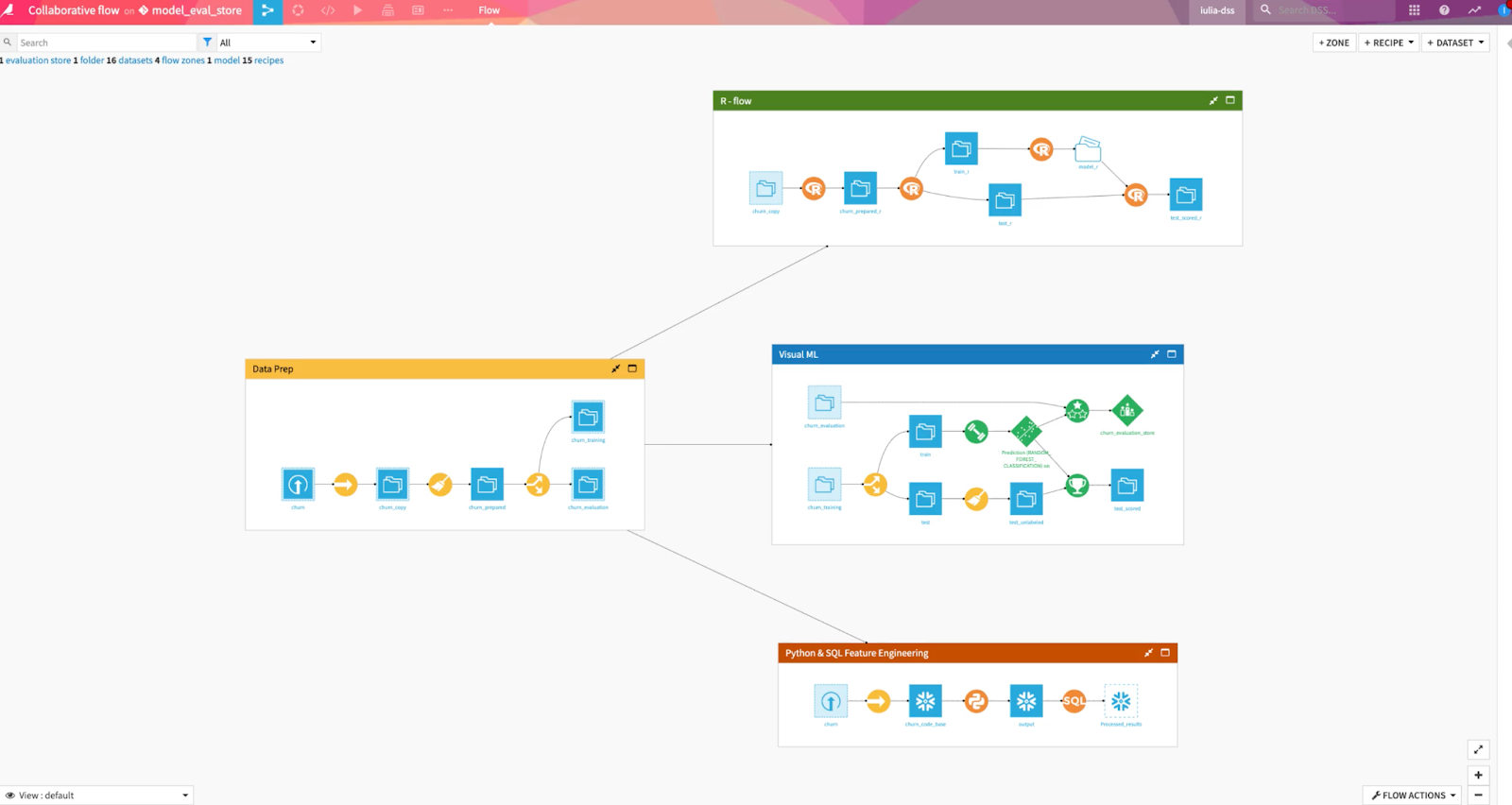

Dataiku Decommissioned

Completed migration, validation, and decommissioning in just 3 months with my DE team, saving $1.5M annually by retiring Dataiku licenses and consolidating tooling.

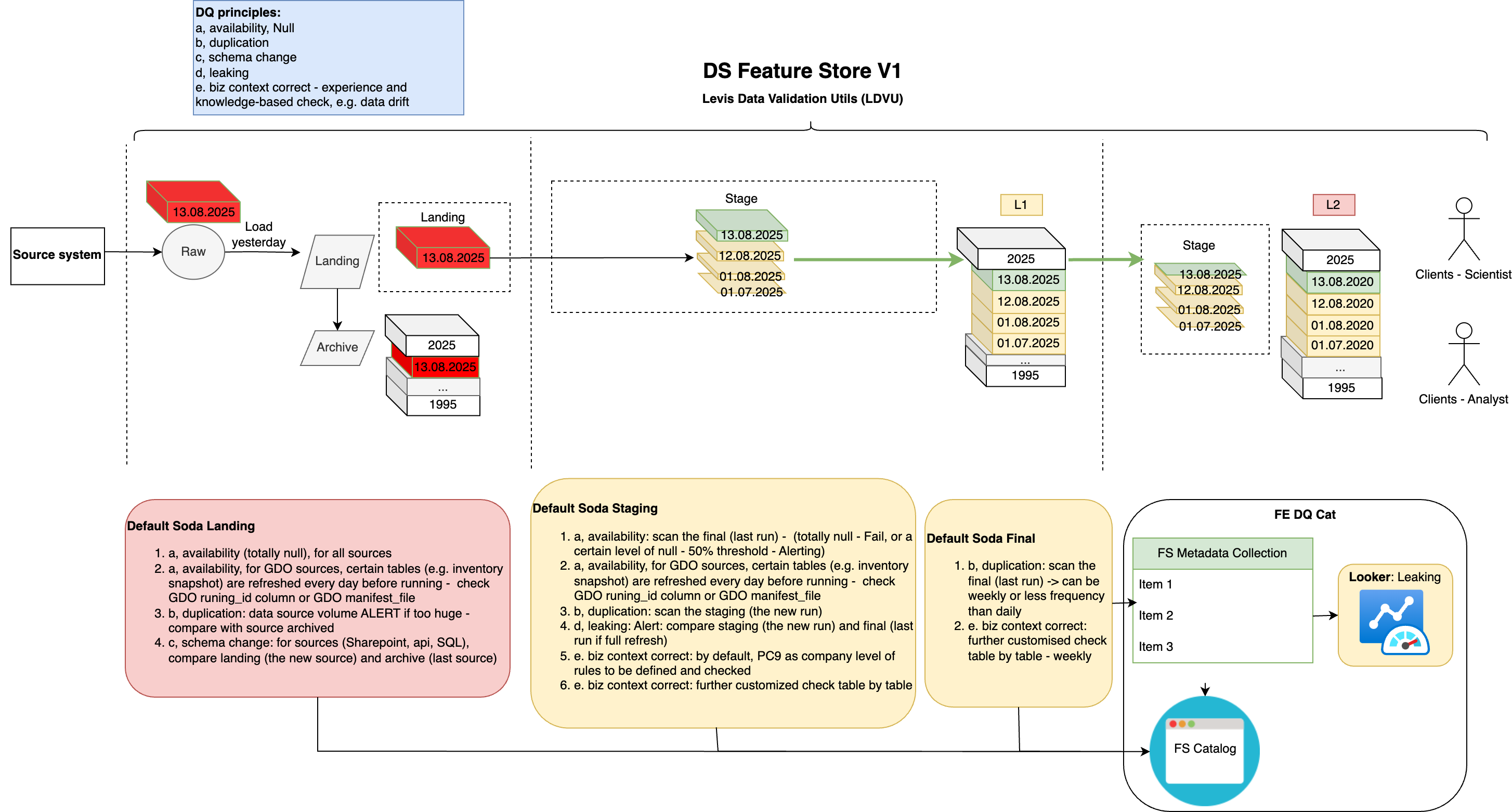

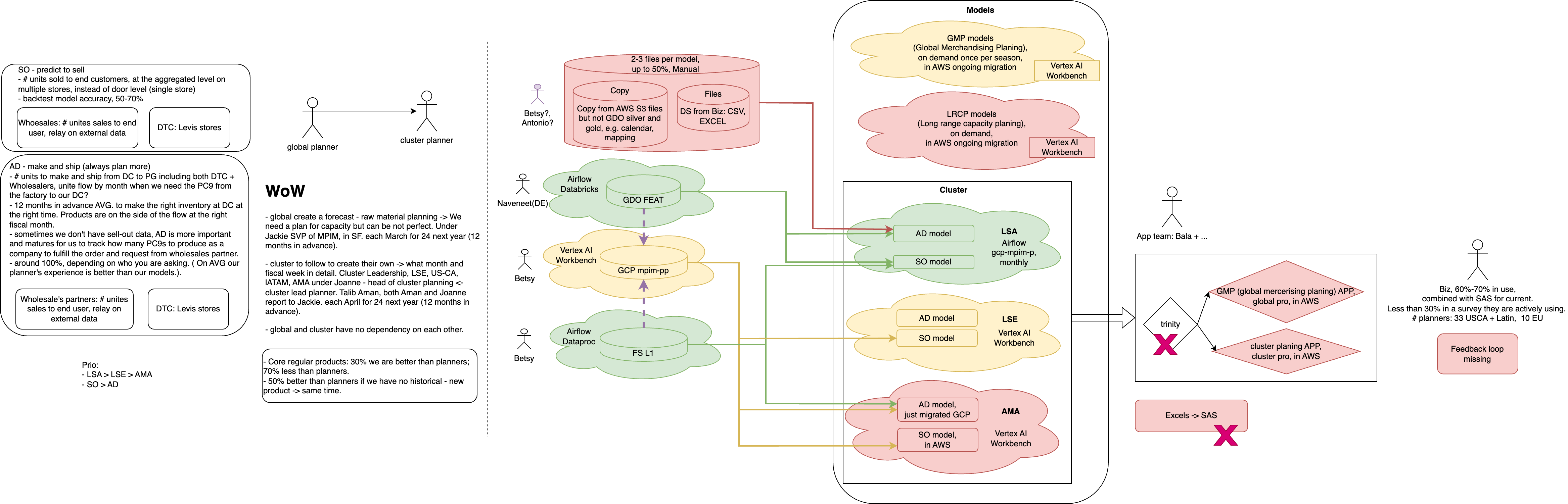

Data Science Use Cases AWS->GCP Migrations

Collaborated closely with data science and business teams (inventory, marketing, finance, etc.) to support Data Science Use Cases—including Price & Promo, Assortment, Inventory Management, and Sizing—ensuring their successful migration across the retail fashion value chain.

Data Science "Trinity" Use Case Global Integration

This use case showcases the complexity of our migration journey, involving data engineering, data science, and analytics across multiple countries. 'Trinity' was originally outside my team’s scope, but slow progress and a lack of the right expertise led to escalating costs, forcing L*vis to maintain both the AWS and GCP ecosystems for this one use case. My team was brought in to resolve the challenges, swiftly tackling technical blockers and completing the migration in just three months.